10 Best ECM Software for 2026

Prevent sensitive data leaks with AI confidentiality. Secure APIs, private LLMs, and ECM document protection can protect your company’s data.

Did you know 99% of organizations have exposed sensitive data to AI tools?

Every time you ask ChatGPT or Claude to “summarize this” or “review that”, you may be leaking your data.

AI confidentiality through secure platforms and API keys is key to protecting your organization (and its reputation!), especially in ECM platforms.

AI confidentiality is the strategy and features used within a DMS or ECM platform to protect sensitive data and avoid security breaches, especially when using cloud-based document management. AI confidentiality ensures that AI models process enterprise data without misusing sensitive information.

In other words, confidential data remains protected not only from human users but also from the AI systems themselves, even as these systems automate critical tasks like classification, tagging, and workflow management.

To achieve AI confidentiality, ECM systems have several features and strategies in place, including:

AI workloads are carried out in isolated environments to prevent unauthorized data access.

AI models process data while maintaining end-to-end encryption, ensuring that sensitive content remains protected in transit and at rest.

Only verified AI tools and trained models can access specific datasets, minimizing exposure risk.

Confidential AI ensures enterprise data remains protected even while being analyzed by AI engines.

For example, a document that contains proprietary intellectual property can be processed for classification or workflow automation with minimal risk when proper security controls and policies are applied.

Without proper AI confidentiality measures, enterprise documents within ECM platforms are exposed to a wide range of risks.

Sensitive information may be stored or cached inadvertently during AI processing, creating opportunities for data leakage.

Improperly configured AI tools could also access documents beyond their intended scope, while employees or contractors might exploit AI systems to gain unauthorized access, increasing the threat of insider misuse.

In addition, mishandling sensitive content can lead to regulatory non-compliance, potentially violating standards such as GDPR, HIPAA, SOC 2, or ISO 27001.

Another common concern is shadow AI usage, where employees leverage unsanctioned AI applications that could unintentionally expose confidential enterprise documents outside the secure environment of the ECM.

APIs (Application Programming Interfaces) allow you to integrate AI models directly into your company’s software, and therefore offer a stronger control over data privacy, costs, and functionality.

What happens when you rely on public AI chatbots instead of APIs?

When you use public chatbots during your workday, you’ll sometimes make requests such as “summarize this document”, “fix grammar”, or “respond to this email”.

And although it seems innocent enough, interactions may be logged or used to improve models unless enterprise privacy settings are applied.

Public AI chatbots from providers such as OpenAI, Anthropic, or Google (Gemini), for instance, typically offer a free tier or a simple subscription. In many cases, interactions with these tools may be logged and used to improve the underlying LLMs.

Because of this, information entered into public chat interfaces should generally not be considered confidential.

If you’re using these chatbots on a more professional level, you should consider using paid API services that provide stronger privacy controls and configurable data-retention policies.

Instead of a subscription-based chatbot, API access works through a pay-per-request model based on tokens. These tokens represent units of text processed by the AI model.

So each API request will have a small cost (usually a couple of cents).
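To make the per-request cost concrete, here is a minimal sketch of how such a charge could be estimated. The prices are hypothetical and chosen only for illustration, and the split into input and output token prices is an assumption about how a provider bills; check your provider's current price list before relying on any figure.

```python
def estimate_request_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Return the estimated cost in dollars of a single API request."""
    total = (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    )
    return total / 1_000_000


# Hypothetical example: a 4,000-token document summarized into 500 tokens,
# at illustrative prices of $3 and $15 per million tokens.
cost = estimate_request_cost(
    input_tokens=4_000,
    output_tokens=500,
    input_price_per_million=3.0,
    output_price_per_million=15.0,
)
print(f"${cost:.4f}")  # prints "$0.0195"
```

Under these assumed prices, summarizing one document costs about two cents, which is in line with the "couple of cents" figure above.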

API interactions generally do not involve human review, and enterprise configurations can prevent data from being used to train models.

This approach provides greater control, cost transparency, and stronger confidentiality protections.

Accessing AI models through APIs requires the use of an API key, which acts as a secure credential similar to a password.

After creating an account with a provider such as Anthropic, you can purchase credits (for example, a prepaid balance) and generate an API key.

The API key allows internal software systems to connect securely to the AI service. Once connected, the AI can perform document summarization, document review, data analysis, workflow automation, and other similar tasks.

Because the API connection occurs directly between the organization’s software and the AI service, it can operate within secure and encrypted environments, so that your confidential documents remain protected.

Although API keys are easy to generate, you should manage them carefully. Improper usage can lead to unexpected costs or security risks.
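One common way to handle a key carefully is to keep it out of source code and out of logs. The sketch below assumes the key is supplied through an environment variable; the variable name `AI_API_KEY` and both helper functions are hypothetical, not part of any provider's SDK.

```python
import os


def load_api_key(env_var: str = "AI_API_KEY") -> str:
    """Read the API key from the environment instead of hardcoding it."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(
            f"Set the {env_var} environment variable before calling the AI service"
        )
    return key


def mask_key(key: str, visible: int = 4) -> str:
    """Return a log-safe form of the key that shows only its last characters."""
    if len(key) <= visible:
        return "*" * len(key)
    return "*" * (len(key) - visible) + key[-visible:]
```

With this approach the key never appears in the repository, and anything written to a log goes through `mask_key` first.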

API billing models are usually quite simple:

However, the monthly billing model can introduce risks if an API key is compromised.

Because API keys function similarly to a credit card number, a stolen key could generate thousands of requests and lead to large unexpected charges.

For this reason, best practices include:

Despite the misconception that AI is always free, enterprise AI usage always involves operational costs, and the cost of unmonitored or misconfigured API usage can escalate quickly.
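As an illustration of keeping usage monitored, the following sketch tracks spending inside your own software and refuses a request that would exceed a monthly limit. The `SpendGuard` class and its limit are hypothetical; this is one possible client-side safeguard, not a feature of any particular provider or of Dokmee.

```python
class BudgetExceeded(Exception):
    """Raised when a request would push spending past the configured limit."""


class SpendGuard:
    """Track estimated API spending and block requests over a monthly limit."""

    def __init__(self, monthly_limit: float) -> None:
        self.monthly_limit = monthly_limit
        self.spent = 0.0

    def charge(self, cost: float) -> None:
        """Record a request's cost, or raise if it would exceed the limit."""
        if self.spent + cost > self.monthly_limit:
            raise BudgetExceeded(
                f"Request of ${cost:.2f} would exceed the "
                f"${self.monthly_limit:.2f} monthly limit"
            )
        self.spent += cost


guard = SpendGuard(monthly_limit=50.0)
guard.charge(0.02)  # a typical request passes and is recorded
```

A guard like this only sees requests made by your own software. It does not stop a stolen key from being used elsewhere, so it works alongside careful key handling rather than replacing it.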

Another option for maximum confidentiality is deploying a private LLM within your organization’s own infrastructure.

In this model, the AI system runs entirely on local servers rather than through external APIs.

Because the model and data remain internal, confidential documents never leave the organization’s environment.

However, private LLM deployment requires significant computing resources, including high-performance GPUs, large system memory, and dedicated AI infrastructure.

This makes private LLM deployments more expensive and technically demanding than API-based solutions, but also more secure if you’re dealing with highly confidential or sensitive documents.

Modern DMS platforms, particularly AI-enabled ECM systems like Dokmee, employ multiple layers of security to keep documents confidential.

First and foremost, Dokmee ECM has an API-based architecture that combines encryption and audit logging to process AI tasks while keeping data confidential.

Requests are transmitted securely, processed by the AI engine, and returned without exposing the underlying documents to third parties.

This architecture allows you to benefit from AI-driven automation while protecting sensitive data within the ECM environment.

By using APIs responsibly, you can make use of advanced AI tasks at a minimal cost per request while maintaining the strict confidentiality standards required for enterprise content management.

Dokmee also applies these 5 security measures to increase confidentiality:

RBAC enforces granular document-level permissions, ensuring that only authorized users can view, edit, or share content.

Departments and projects can have tailored access restrictions, and the principle of least privilege ensures that employees only access the files necessary for their roles.
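The idea of role-based, least-privilege access can be sketched in a few lines. The role names, permission sets, and data classes below are hypothetical and only illustrate the principle; they do not describe how Dokmee implements RBAC.

```python
from dataclasses import dataclass, field

# Hypothetical roles, each granting a fixed set of actions.
ROLE_PERMISSIONS = {
    "viewer": {"view"},
    "editor": {"view", "edit"},
    "manager": {"view", "edit", "share"},
}


@dataclass
class Document:
    doc_id: str
    department: str


@dataclass
class User:
    name: str
    # Maps a department name to the role the user holds there.
    roles: dict = field(default_factory=dict)


def is_allowed(user: User, document: Document, action: str) -> bool:
    """Allow an action only if the user's role in the document's department grants it."""
    role = user.roles.get(document.department)
    if role is None:
        return False  # least privilege: no role in the department means no access
    return action in ROLE_PERMISSIONS.get(role, set())


contract = Document(doc_id="C-001", department="legal")
ana = User(name="Ana", roles={"legal": "editor"})
print(is_allowed(ana, contract, "edit"))   # prints True
print(is_allowed(ana, contract, "share"))  # prints False
```

Access is denied by default, so a user with no role in a department cannot reach its documents at all.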

AI confidentiality begins with strict access control policies inside the DMS, forming the foundation of secure enterprise content management.

Documents are encrypted during both storage and transmission.

Secure Sockets Layer (SSL) and Transport Layer Security (TLS) protect data moving across networks, while database-level encryption safeguards stored information. Encrypted backups and cloud storage further reduce risk.

Confidential AI relies on encrypted data pipelines to prevent exposure during processing, ensuring that even AI-driven workflows cannot compromise sensitive content.
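For data in transit, a client can refuse connections that fall below a chosen protocol version. This is a minimal sketch using Python's standard `ssl` module; the helper name `make_tls_context` and the choice of TLS 1.2 as the minimum are assumptions made for illustration. Note that the original SSL protocol versions are deprecated, and "SSL" is often used as shorthand for TLS.

```python
import ssl


def make_tls_context() -> ssl.SSLContext:
    """Build a client-side context that verifies certificates and requires TLS 1.2+."""
    # The default context enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Reject older protocol versions outright.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_tls_context()
print(context.minimum_version.name)  # prints "TLSv1_2"
```

The returned context can be passed to standard-library clients that accept one, such as `urllib.request.urlopen(url, context=context)`.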

AI automatically classifies documents based on content sensitivity, thanks to natural language processing and AI indexing.

Using advanced natural language processing and pattern recognition, confidential AI can detect proprietary information, personally identifiable data, or intellectual property, and apply automated policies to restrict access.

Confidential AI strengthens document security by automatically identifying and protecting sensitive content, reducing human error in tagging or handling confidential documents.
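To show the shape of automated sensitivity classification, here is a deliberately simple sketch that uses regular expressions as a stand-in for the natural language processing described above. The patterns, labels, and the `classify` function are all hypothetical; a production classifier would be far more thorough.

```python
import re

# Hypothetical detection rules: a pattern name mapped to a compiled regex.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "confidential_marker": re.compile(
        r"\b(confidential|proprietary|trade secret)\b", re.IGNORECASE
    ),
}


def classify(text: str) -> dict:
    """Label text as 'restricted' if any sensitive pattern matches, else 'general'."""
    matches = sorted(
        name for name, pattern in PATTERNS.items() if pattern.search(text)
    )
    return {
        "sensitivity": "restricted" if matches else "general",
        "matches": matches,
    }


result = classify("Contact jane@example.com, SSN 123-45-6789")
print(result["sensitivity"])  # prints "restricted"
print(result["matches"])      # prints "['email', 'us_ssn']"
```

The resulting label could then drive an access policy, for example limiting "restricted" documents to specific roles.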

AI confidentiality transforms ECM security from reactive auditing to proactive threat detection.

By monitoring file access patterns, download volumes, and permission changes, AI can detect anomalies such as unusual mass downloads or access attempts outside normal working hours.

This allows administrators to respond immediately to potential breaches or misuse.
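The kind of monitoring described above can be approximated with simple rules. This sketch flags access outside working hours and unusually high daily download counts; the event format, the thresholds, and the `find_anomalies` function are assumptions made for illustration, and a real system would likely learn a baseline per user instead of using fixed limits.

```python
from collections import Counter
from datetime import datetime


def find_anomalies(events, max_downloads=50, work_start=8, work_end=18):
    """Return (user, alert) pairs for off-hours access and mass downloads.

    `events` is an iterable of (user, action, timestamp) tuples.
    """
    alerts = []
    downloads = Counter()
    for user, action, timestamp in events:
        # Flag any access outside the configured working hours.
        if not (work_start <= timestamp.hour < work_end):
            alerts.append((user, "off_hours_access"))
        # Count downloads per user per calendar day.
        if action == "download":
            downloads[(user, timestamp.date())] += 1
    for (user, _day), count in downloads.items():
        if count > max_downloads:
            alerts.append((user, "mass_download"))
    return alerts


events = [("ana", "download", datetime(2026, 1, 5, 10, 0))] * 3
events.append(("bo", "view", datetime(2026, 1, 5, 23, 30)))
print(find_anomalies(events, max_downloads=2))
# prints "[('bo', 'off_hours_access'), ('ana', 'mass_download')]"
```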

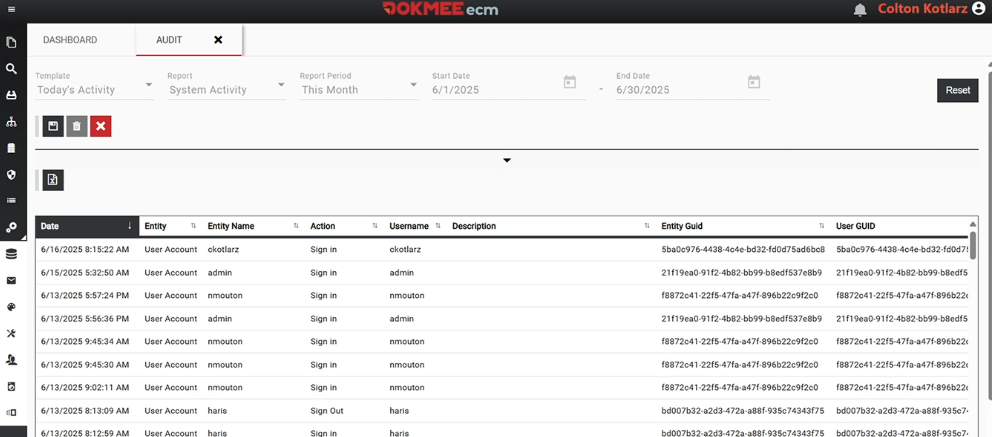

Full document histories, AI activity logs, and user-level accountability provide an additional layer of security.

Organizations can track every action performed on a document, from viewing and editing to sharing and deletion, which supports compliance reporting and internal investigations.

Furthermore, audit trails cannot be altered at any time.
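One general technique for protecting an audit trail is hash chaining, where each entry stores a hash of the previous one so that any later change breaks the chain. The sketch below illustrates that technique only; the field names and functions are hypothetical, and it is not a description of how Dokmee stores its audit trails. Strictly speaking, chaining makes tampering detectable rather than impossible.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash an entry body deterministically."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_entry(log: list, user: str, action: str, doc_id: str) -> dict:
    """Append an audit entry linked to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"user": user, "action": action, "doc_id": doc_id, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, "ana", "view", "C-001")
append_entry(log, "ana", "edit", "C-001")
print(verify_chain(log))  # prints True
```

If someone later changes any field of a stored entry, `verify_chain` returns `False`, which gives auditors a quick integrity check.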

Dokmee ECM is a prime example of an AI-ready enterprise content management platform that supports confidential enterprise document management.

Some of the security features include:

These features guarantee that organizations can implement confidential AI strategies without sacrificing usability or workflow efficiency.

AI confidentiality addresses your security challenges by protecting your documents and workflows from possible threats.

Intelligent automation can then run without compromising strict privacy and security standards.

By implementing granular access controls, encryption, AI-powered classification, secure processing environments, and comprehensive auditing, you can ensure your confidential documents remain protected.

Dokmee provides a modern, AI-ready framework to support these strategies, making confidential AI a strategic advantage in enterprise content management.
